The Hidden Risks of Your New AI Assistant

- AUGUST 25TH, 2025

- 2min read

Introduction

AI chatbots and large language models (LLMs) like ChatGPT, Claude, and Gemini have become powerful tools for boosting productivity. However, this new technology has created a major security blindspot. Without realising it, employees can expose confidential company data and create new vulnerabilities that cybercriminals are actively exploiting. This advisory highlights the unseen risks and gives you the tools to use these AI assistants safely and securely.

The Critical Threat: How AI Chatbots Can Be Exploited

Attackers are targeting AI chatbots in new ways that can compromise your organisation:

-

Data Leakage: When you input sensitive data, like proprietary code, customer lists, or internal strategies, into a public chatbot, that information can be used to train the model. This means your confidential data could be inadvertently exposed to other users or stored in a third-party service outside of your company’s control.

-

Prompt Injection: This is a new type of attack where a hacker tricks an AI chatbot into ignoring its safety rules. A malicious prompt can be disguised to make the chatbot leak private information, perform unauthorised actions, or even reveal its own internal code.

-

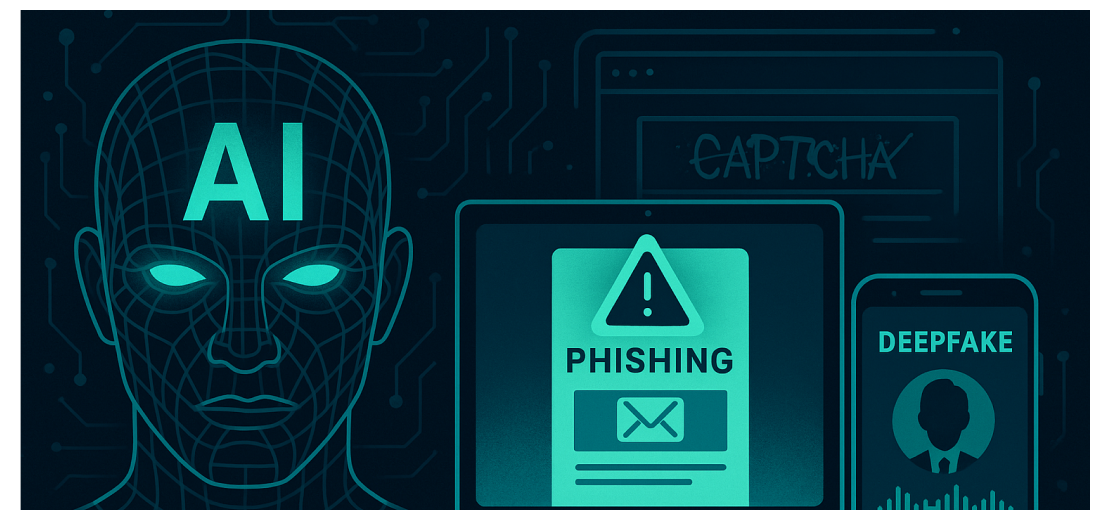

Sophisticated Phishing: Attackers use these tools to create highly convincing, grammatically perfect phishing emails and messages. This makes it significantly harder to spot a fake and can lead to more successful attacks, especially when paired with other forms of social engineering.

Real-World Incidents Show the Danger is Real

-

Samsung Breach: In a widely-reported incident, engineers at Samsung accidentally uploaded confidential source code into a public AI chatbot, exposing proprietary company data. Samsung has since restricted employee use of these tools.

-

Researcher Findings: Security researchers have demonstrated how easily chatbots can be “jailbroken” to bypass safety protocols and provide instructions for harmful activities, proving that the technology’s defenses are not foolproof.

Urgent Actions Required: Use AI Securely

Follow these guidelines to protect company data when using AI chatbots:

-

Treat All Chatbots as Public: Never input any data into a public chatbot you wouldn’t share on a public website.

-

Use Sanctioned Tools Only: Only use AI tools approved by the company, which often have stronger security, privacy protections, and data usage policies that protect your information.

-

Be Aware of Prompt Injection: Be skeptical of chatbots that seem to be acting strangely or providing unusual responses. If you encounter something odd, stop the conversation and report it.

-

Verify Information Independently: AI chatbots can “hallucinate” or provide inaccurate information. Always verify any critical information or code with a trusted source before using it.

Conclusion: Stay Vigilant

AI is a powerful tool, but it’s not a secure vault. By understanding these new risks and using tools with caution, you can use AI to your advantage without compromising security.

Explore more CIL Advisories

Review Bombing Attacks and Extortion

IntroductionMalicious actors use "review-bombing", a coordinated flood of fake, one-star reviews as an initial step for extortion. This high volume…

NOVEMBER 26TH, 2025

Read More

Synthetic Phishing: AI-Enabled Insider Impersonation

IntroductionThreat actors increasingly use artificial intelligence (AI) to impersonate trusted individuals such as executives, employees, or suppliers within organisations. These…

NOVEMBER 24TH, 2025

Read More

The Silent Security Threat: Data Hoarding

IntroductionThe greatest risk to your organization may be the sheer volume of data we hold, a practice known as Data…

NOVEMBER 19TH, 2025

Read MoreNever miss a CIL Security Advisory

Stay informed with the latest security updates and insights from CIL.